About me

I am a first-year Ph.D. student (fall intake) in the IoT Lab at HKU EEE, supervised by Prof. Edith C. H. Ngai. I attended Sun Yat-sen University, where I earned my Bachelor of Engineering (B.E.) and Master of Engineering (M.E.) degrees in Transportation Engineering (2018 to 2022 and 2022 to 2025, respectively) under the supervision of Prof. Wei Wang.

My research interests include multi-modal learning, affective computing, AI for healthcare, and intelligent transportation systems (spatial-temporal data prediction). I always welcome opportunities for research collaboration and am eager to explore new research ideas. My CV can be found here: CV.

If you’re interested, please feel free to drop me an email (cccccj03@gmail.com).

News

❗️❗️2026.01.17 Two papers were accepted by ICASSP 2026: one on open-vocabulary multi-modal emotion recognition (co-first author) and one on ECG-based emotion recognition (second author).

❗️❗️2026.01.16 One paper about self-supervised ECG signal representation learning was accepted by WWW 2026 Web4Good (first student author).

❗️❗️2025.11.08 One paper about Sticker Response Selection based on Emotion and Intention Guided Multi-Modal Learning was accepted by AAAI 2026!!

❗️❗️2025.07.07 One paper about Sticker Emotion Recognition with MLLMs, inspired by the human ability to interpret sticker emotions from multiple views, was accepted by ACM MM 2025!!

❗️❗️2025.04.29 One paper about ECG-Report Representation Learning was accepted by IJCAI 2025!!

❗️❗️2024.07.16 One paper about Sticker Emotion Recognition was accepted by ACM MM 2024!!

Selected Publications

AI for Healthcare

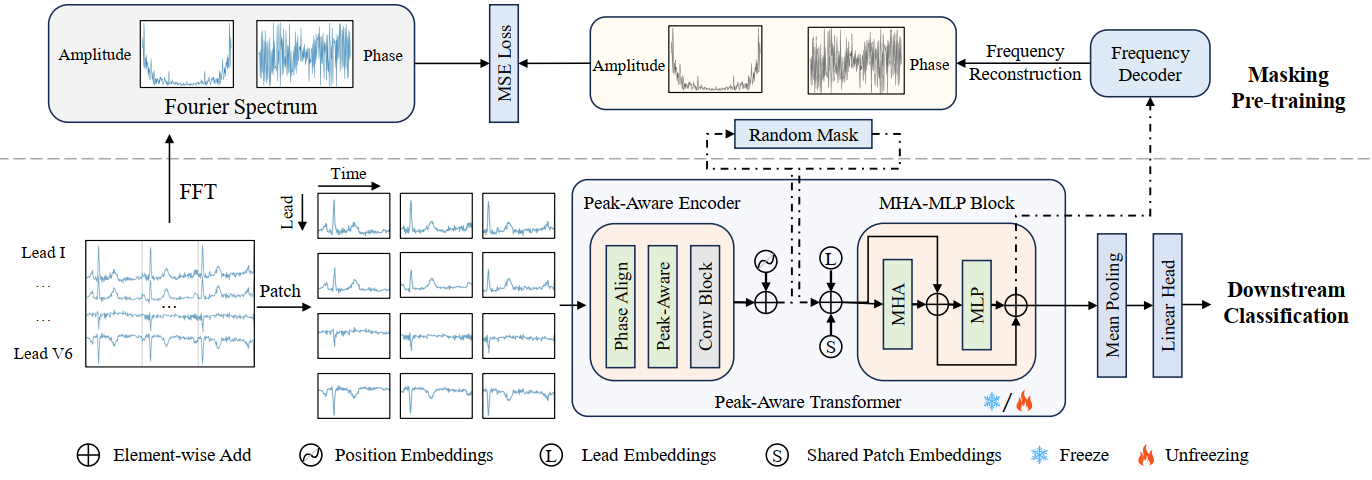

| Sustainable and Responsible ECG-Based AI Diagnostics: Masked Frequency Reconstruction with Peak-Aware Transformers, WWW 2026 Web4Good 👉 [Code] / [Paper] |

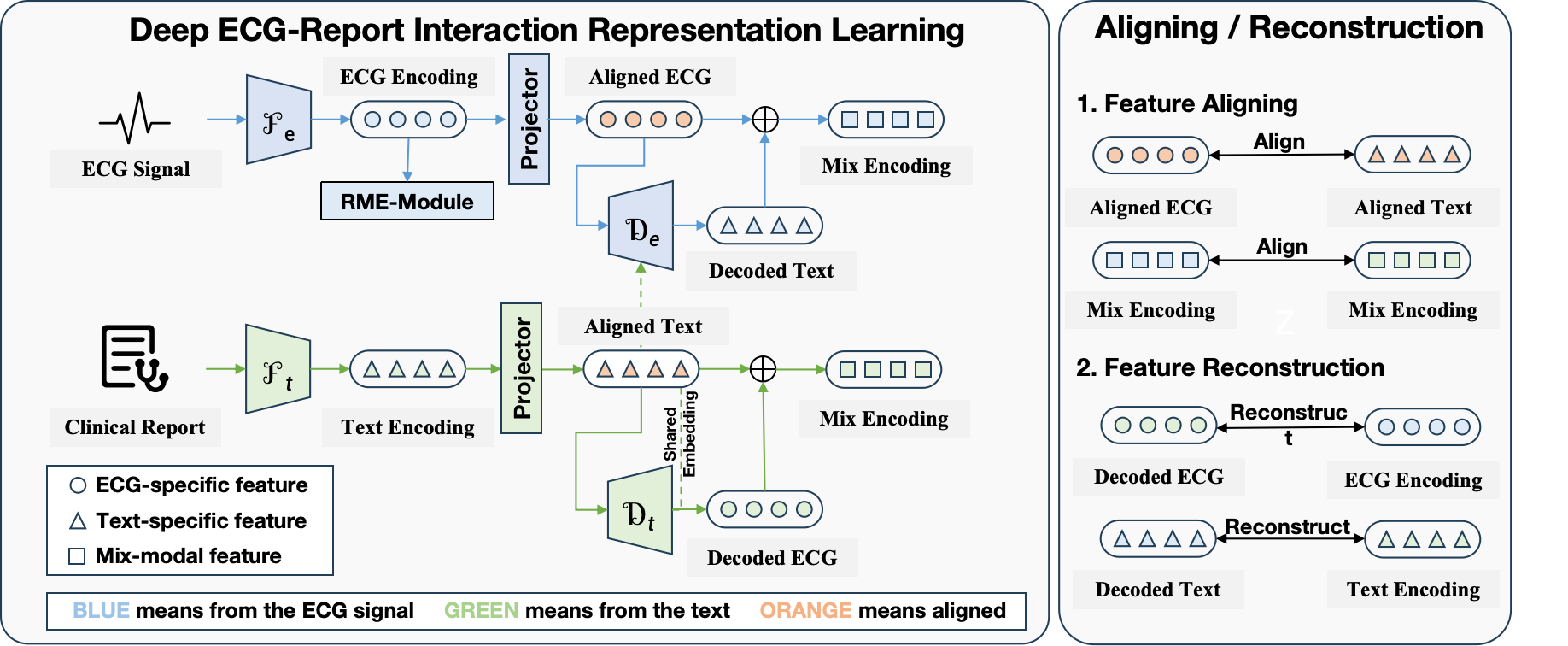

| DERI: Cross-Modal ECG Representation Learning with Deep ECG-Report Interaction, IJCAI 2025 (Oral) 👉 [Code] / [Paper] (preprint) |

Affective Computing

Clue2Emo: A Brain-Inspired Framework for Open-Vocabulary Multimodal Emotion Recognition, ICASSP 2026. A preprint will be available soon.

E-ECGMIL: ECG-based Emotion Recognition via Time-Aware Multiple Instance Learning, ICASSP 2026. A preprint will be available soon.

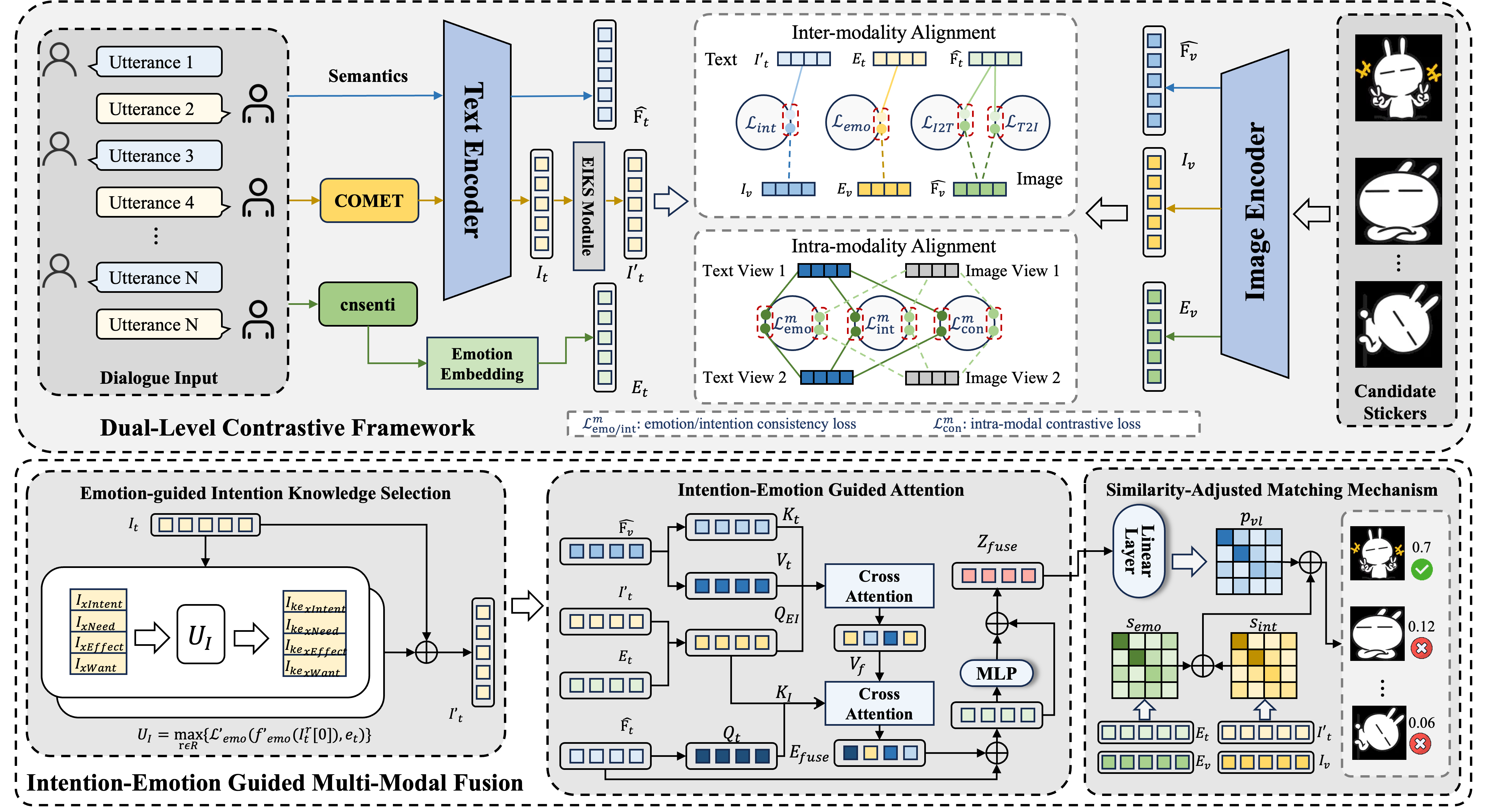

| Emotion and Intention Guided Multi-Modal Learning for Sticker Response Selection, AAAI 2026 (Poster) 👉 [Code] / [Paper] |

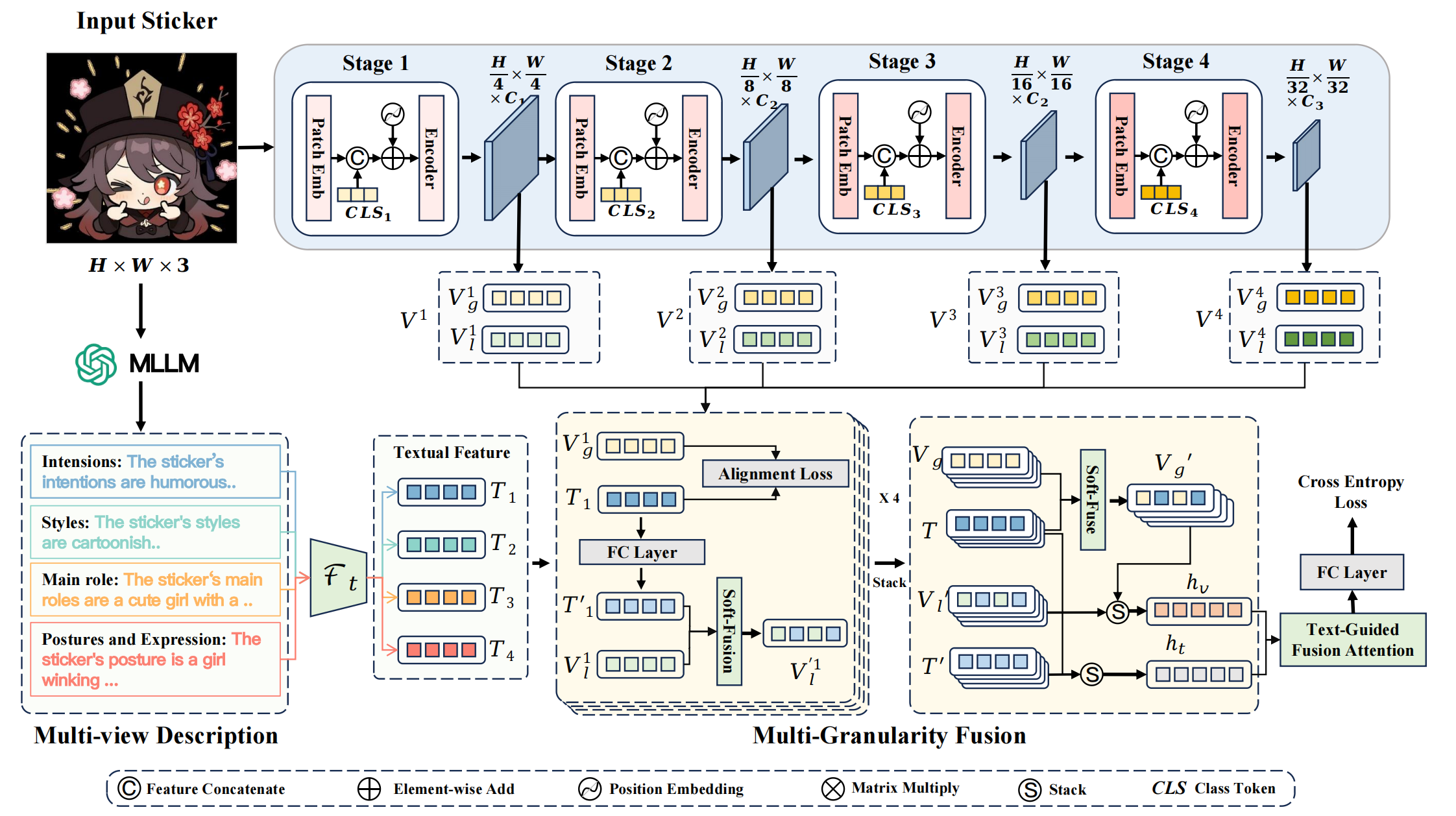

| MGHFT: Multi-Granularity Hierarchical Fusion Transformer for Cross-Modal Sticker Emotion Recognition, ACM MM 2025 (Oral) 👉 [Code] / [Paper] |

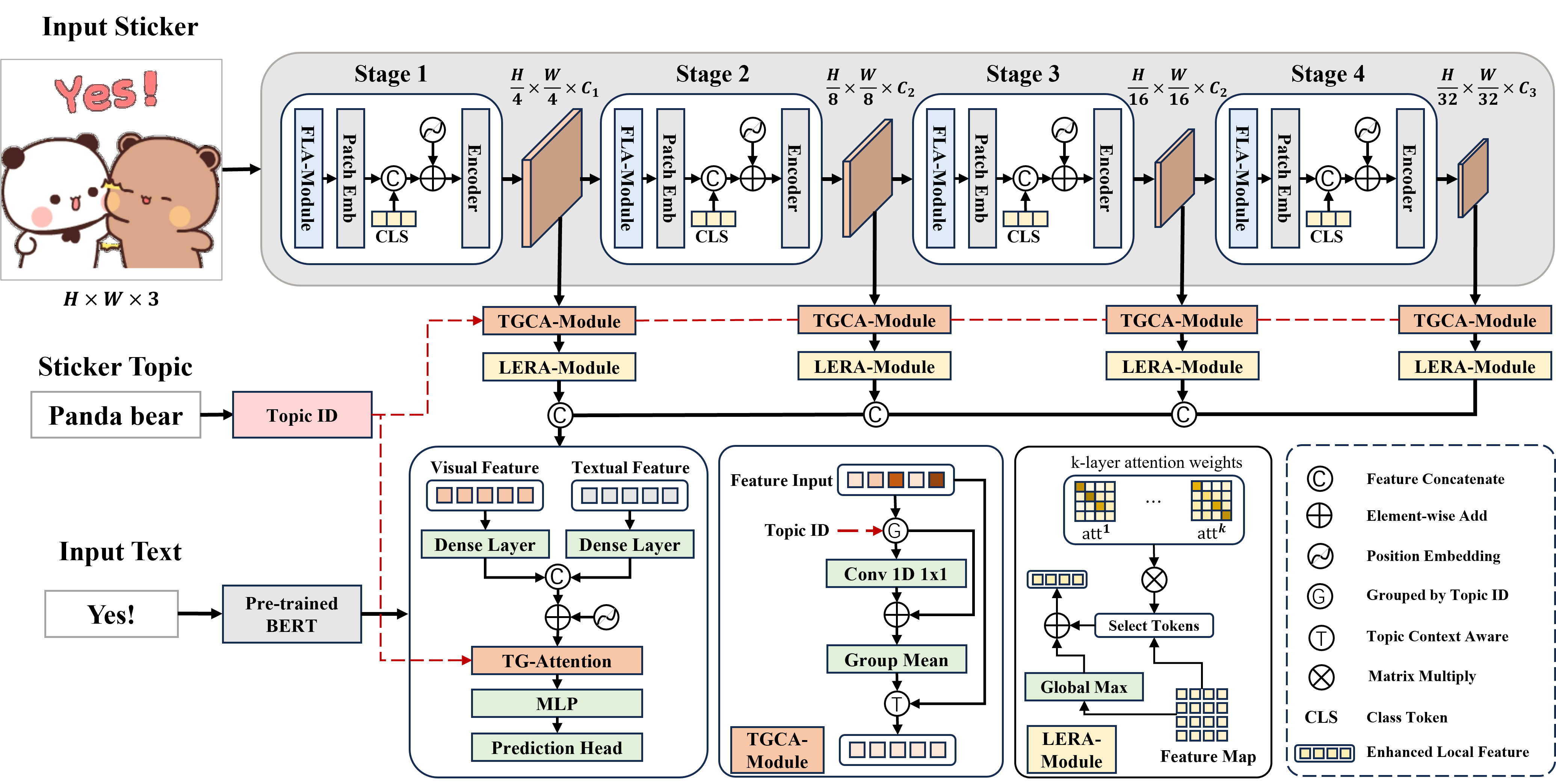

| TGCA-PVT: Topic-Guided Context-Aware Pyramid Vision Transformer for Sticker Emotion Recognition, ACM MM 2024 (Poster) 👉 [Code] / [Paper] |